LowKey

Vocal training with real-time pitch detection

About LowKey

LowKey is a vocal training platform with real-time pitch detection and visualization. Users upload a song, Demucs separates the vocals, and singers then practice with live feedback that compares their pitch to the reference melody. It is built with a React/Vite frontend and a FastAPI/Celery backend.

The Challenge

Vocal training traditionally requires an in-person coach or expensive software. Existing apps either give generic pitch feedback with no reference melody or require pre-processed backing tracks. We found no tool that let singers upload any song and practice against its actual vocal melody.

Our Solution

We integrated Meta's Demucs model for vocal separation: users upload any song, and a Celery background job isolates the vocal track automatically. The React frontend uses the Web Audio API for real-time pitch detection and renders a 60fps canvas visualization that overlays the singer's pitch on the reference melody.
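To make the "singer versus reference" comparison concrete, here is a minimal sketch of how two pitches can be compared in cents (1/100 of a semitone). The function names and the 50-cent tolerance are illustrative assumptions, not taken from the LowKey codebase.

```typescript
// Hypothetical helpers for illustration; names and tolerance are assumptions.

/** Signed distance from referenceHz to sungHz in cents (100 cents = 1 semitone). */
function centsOff(sungHz: number, referenceHz: number): number {
  // A non-positive frequency means no pitch was detected (silence, breath noise).
  if (sungHz <= 0 || referenceHz <= 0) return NaN;
  return 1200 * Math.log2(sungHz / referenceHz);
}

type PitchVerdict = "flat" | "in-tune" | "sharp" | "silent";

/** Classify the singer's pitch relative to the reference melody. */
function judgePitch(
  sungHz: number,
  referenceHz: number,
  toleranceCents = 50, // assumed tolerance: half a semitone either side
): PitchVerdict {
  const cents = centsOff(sungHz, referenceHz);
  if (Number.isNaN(cents)) return "silent";
  if (cents < -toleranceCents) return "flat";
  if (cents > toleranceCents) return "sharp";
  return "in-tune";
}
```

Working in cents rather than raw Hz keeps the tolerance musically uniform: a 50-cent error sounds equally off whether the reference note is low or high.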

The Results

The architecture cleanly separates compute-heavy AI processing (FastAPI + Celery + Redis) from the real-time frontend. Canvas-based rendering maintains smooth 60fps even during live audio analysis. Because separation runs in background jobs, the system handles songs of any length without blocking the UI.

Key Features

AI Vocal Separation

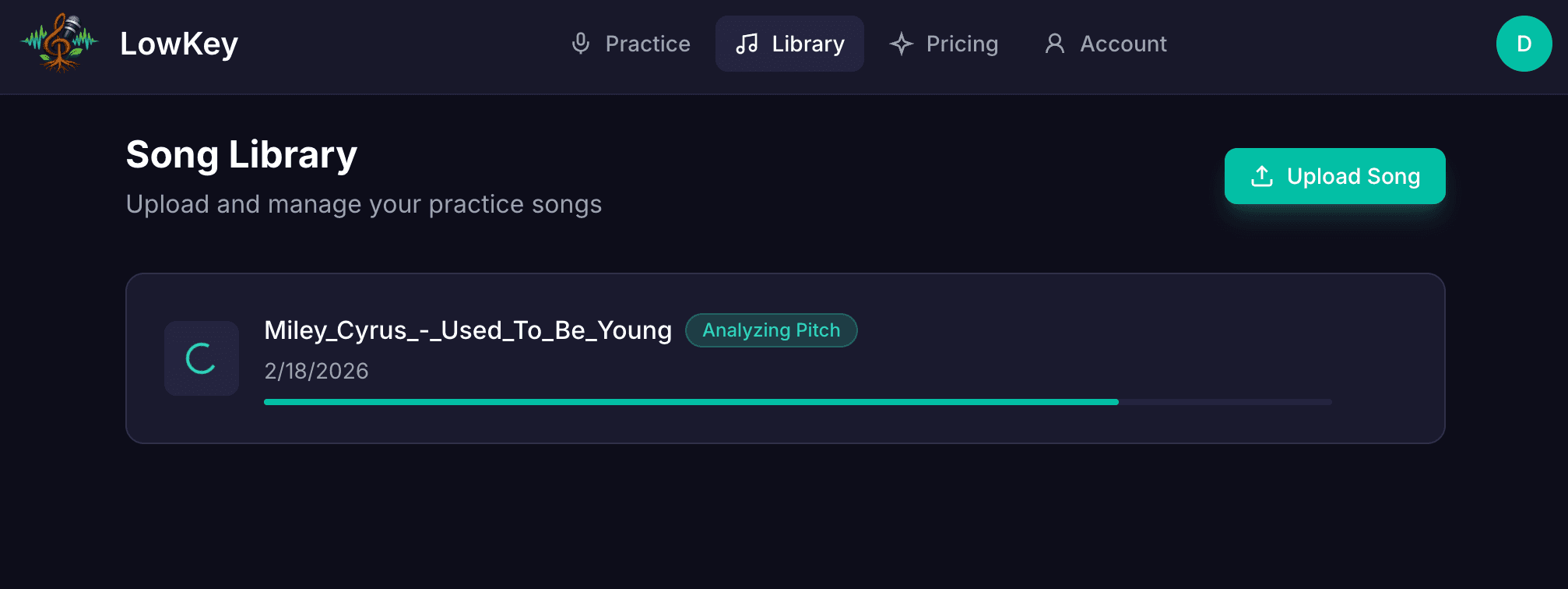

Upload any song and Demucs automatically isolates the vocal track for practice reference.

Real-Time Pitch Visualization

Canvas-rendered pitch graph at 60fps shows your voice overlaid on the reference melody in real time.
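One way to place both pitch lines on the same canvas is to map frequency to a vertical pixel on a logarithmic (semitone) scale, so each semitone gets equal height. The sketch below assumes a display range of MIDI notes 36 to 84 (C2 to C6); that range and the function names are illustrative, not LowKey's actual values.

```typescript
/** Convert a frequency in Hz to a fractional MIDI note number (A4 = 440 Hz = 69). */
function hzToMidi(hz: number): number {
  return 69 + 12 * Math.log2(hz / 440);
}

/**
 * Map a pitch to a canvas y coordinate. Higher notes land nearer the top
 * (y = 0); pitches outside the assumed display range are clamped to the edges.
 */
function pitchToY(
  hz: number,
  canvasHeight: number,
  minMidi = 36, // assumed bottom of the graph (C2)
  maxMidi = 84, // assumed top of the graph (C6)
): number {
  const midi = Math.min(maxMidi, Math.max(minMidi, hzToMidi(hz)));
  const t = (midi - minMidi) / (maxMidi - minMidi); // 0 at bottom, 1 at top
  return (1 - t) * canvasHeight;
}
```

Inside a `requestAnimationFrame` loop, each frame would call `pitchToY` once for the singer's pitch and once for the reference pitch, then draw both points with the 2D context (for example `ctx.lineTo(x, pitchToY(hz, canvas.height))`).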

Web Audio API Integration

Browser-native microphone input with real-time FFT analysis for accurate pitch detection.
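As a rough illustration of FFT-based pitch estimation, the sketch below picks the strongest bin in a magnitude spectrum (such as the array filled by `AnalyserNode.getFloatFrequencyData()`) and refines it with parabolic interpolation. The search range, silence threshold, and function name are assumptions. A plain spectral peak can also land on a harmonic rather than the fundamental, so a production detector would likely need extra logic that is not shown here.

```typescript
/**
 * Estimate the dominant frequency from an FFT magnitude spectrum in dB.
 * Returns null when the strongest bin is below minDb (treated as silence).
 * minHz, maxHz, and minDb are assumed defaults covering a typical vocal range.
 */
function peakFrequency(
  magnitudesDb: Float32Array,
  sampleRate: number,
  minHz = 80,
  maxHz = 1100,
  minDb = -70,
): number | null {
  const fftSize = magnitudesDb.length * 2; // frequencyBinCount is fftSize / 2
  const binHz = sampleRate / fftSize;      // width of one bin in Hz
  const lo = Math.max(1, Math.ceil(minHz / binHz));
  const hi = Math.min(magnitudesDb.length - 2, Math.floor(maxHz / binHz));

  // Find the loudest bin inside the search range.
  let peak = -1;
  for (let i = lo; i <= hi; i++) {
    if (peak < 0 || magnitudesDb[i] > magnitudesDb[peak]) peak = i;
  }
  if (peak < 0 || magnitudesDb[peak] < minDb) return null;

  // Parabolic interpolation through the peak and its two neighbours
  // gives a sub-bin offset in the range [-0.5, 0.5].
  const a = magnitudesDb[peak - 1];
  const b = magnitudesDb[peak];
  const c = magnitudesDb[peak + 1];
  const raw = (0.5 * (a - c)) / (a - 2 * b + c);
  const offset = Number.isFinite(raw) ? raw : 0; // flat or -Infinity neighbours

  return (peak + offset) * binHz;
}
```

With a 2048-point FFT at 44.1 kHz each bin is about 21.5 Hz wide, which is more than a semitone in the low vocal range. That is why the interpolation step (or a larger FFT size) matters for usable accuracy.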

Background Job Processing

Celery workers handle Demucs processing asynchronously, so the UI stays responsive while an uploaded song is being separated.
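On the frontend, a background job like this is commonly consumed by polling for its status. The sketch below is one possible shape, not LowKey's actual client: the `JobStatus` payload, the `vocalsUrl` field, and the polling interval are all hypothetical. The state names mirror Celery's built-in task states, but how the backend exposes them is an assumption.

```typescript
// Hypothetical payload; the real API routes and fields are not described here.
type JobState = "PENDING" | "STARTED" | "SUCCESS" | "FAILURE";

interface JobStatus {
  state: JobState;
  vocalsUrl?: string; // assumed: location of the isolated vocal track
  error?: string;
}

/**
 * Poll a status source until the separation job finishes.
 * getStatus is injected so the caller decides how the status is fetched.
 */
async function waitForSeparation(
  getStatus: () => Promise<JobStatus>,
  intervalMs = 2000,
  maxAttempts = 300,
): Promise<string> {
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    const status = await getStatus();
    if (status.state === "SUCCESS" && status.vocalsUrl) return status.vocalsUrl;
    if (status.state === "FAILURE") {
      throw new Error(status.error ?? "separation failed");
    }
    // Still pending or running: wait before asking again.
    await new Promise<void>((resolve) => setTimeout(resolve, intervalMs));
  }
  throw new Error("timed out waiting for separation");
}
```

In a browser, `getStatus` could wrap a `fetch` call to whatever status endpoint the backend provides. Because the wait is asynchronous, the rest of the UI keeps rendering while the job runs.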